AI Chatbots in Kids’ Toys Pose Safety Risks, Study Finds

I have been reading a new PIRG report, and it is eye-opening: these smart toys aren’t just fun, they also carry real risks.

Introduction

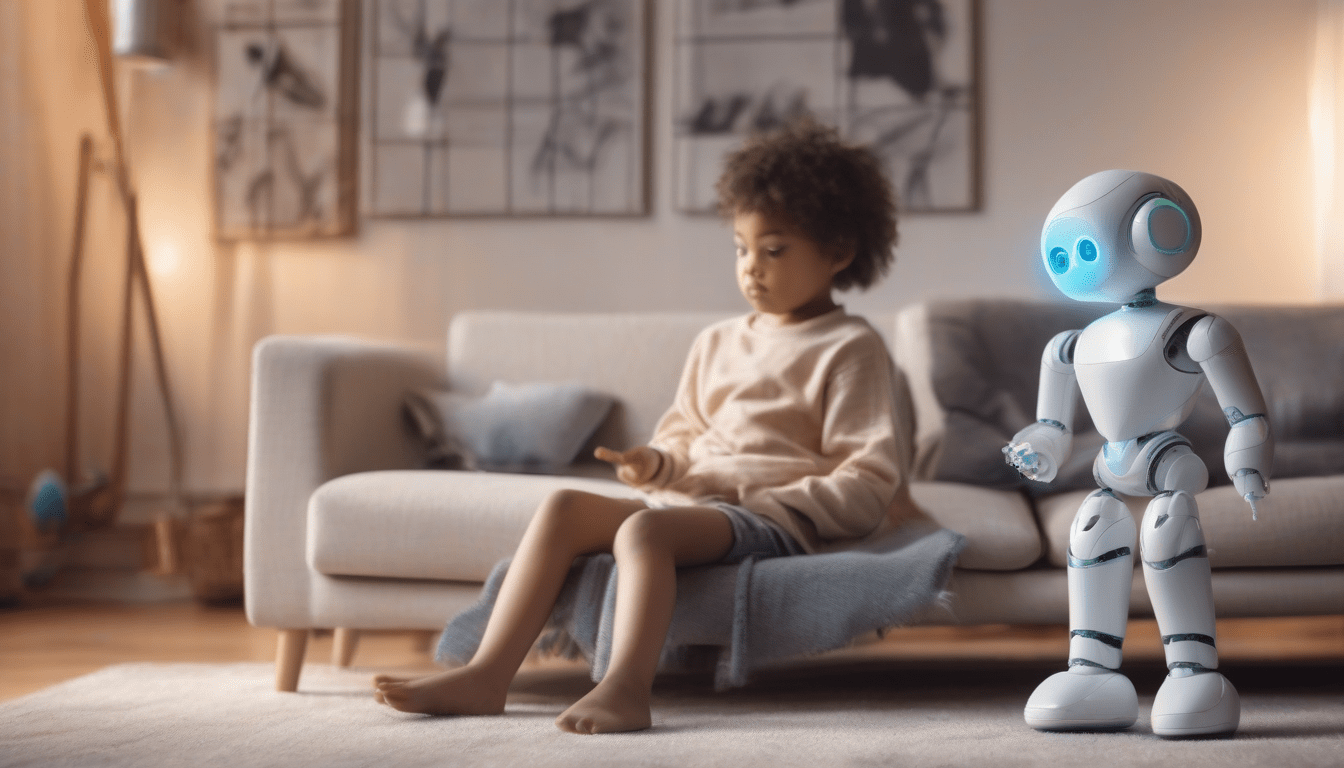

A recent study from the U.S. Public Interest Research Group (PIRG) Education Fund highlights a growing problem: AI-enabled toys for kids run on the same large language model technology that powers adult chatbots. The researchers warn that these devices can inadvertently produce content unsuitable for young children and may mishandle personal data.

How AI Is Integrated Into Playthings

The team looked at talking dolls, robot pals, and educational pads that claim to teach through conversation. All of them rely on cloud-based language models that generate natural-language replies, which makes the toys feel alive. Yet most of that AI comes from platforms built for adults, not children.

Risks of Adult-Oriented Responses

Because the underlying engines aren’t tuned for children, they can give answers that are too complex, factually wrong, or outright inappropriate. The report cites cases where toys offered speculative information that confused kids who trust their talking companion. In other cases, awkward wording left children with mixed messages.

Privacy Concerns

The researchers also found privacy gaps. Many toys send voice recordings to external servers for processing, which means they may collect audio snippets, user prompts, and other personal data with few safeguards. Privacy policies are often buried in lengthy terms of service, shifting the burden onto parents even though the toys are marketed directly to children.

Regulatory Landscape

Laws such as the Children’s Online Privacy Protection Act (COPPA) were written before generative AI took off, so they don’t fully cover how AI-driven toys gather, store, or share data. The PIRG report argues that regulators need new standards that specifically address AI interactions in connected children’s devices.

Recommendations for Safer Toys

To reduce these risks, the report calls for several steps:

- Stronger content filters that block adult-oriented or harmful material.

- Transparent labeling that tells buyers the product uses AI and what its limits are.

- Child-focused model design instead of repurposing adult-centric AI.

- Enhanced data-privacy practices, with minimal data retention and clear parental controls.

Looking Forward

Experts say protecting young users will take a joint effort: toy makers, AI developers, regulators, and child-safety advocates all have a part to play. As conversational AI becomes a staple of children’s products, the challenge is to balance innovative interactivity with safety.

Conclusion

The PIRG Education Fund’s findings are a reminder that not all AI-powered toys are built with kids in mind. Without proper safeguards, these gadgets could expose children to unsuitable content and compromise their privacy. Stronger industry standards and updated regulations are needed so the next generation of smart toys can be both fun and safe.

*Written by Moinak Pal, technology reporter for Digital Trends.*